Making sense of data using AI is becoming crucial to our daily lives and has significantly shaped my professional career in the last 5 years.

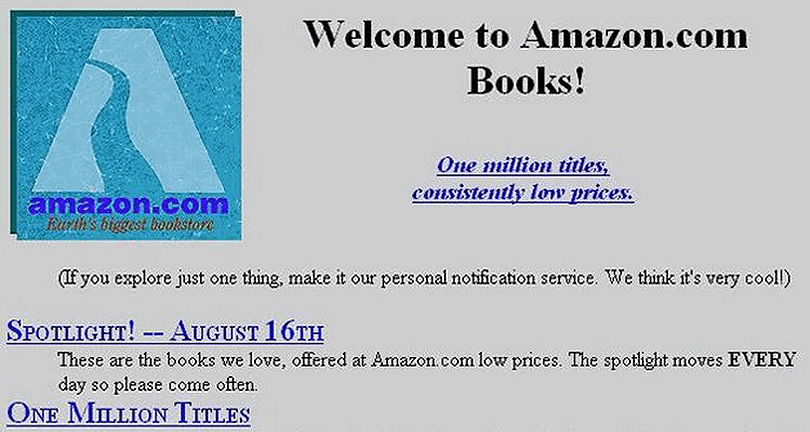

I began working on the Web in the mid-nineties, when Amazon was still a bookseller with a primitive website.

At that time it became extremely clear that the world was about to change and every single aspect of our society in both cultural and economic terms was going to be radically transformed by the information society. I was in my twenties, eager to make a revolution and the Internet became my natural playground. I dropped out of school and worked day and night contributing to the Web of today.

Twenty years later, I am again witnessing a similar – if not even more radical – transformation of our society as we race toward the so-called AI transformation. This basically means applying machine learning, ontologies and knowledge graphs to optimize every process of our daily lives.

At the personal level I am back in my twenties (sort of): I wake up at night to train a new model, to read the latest research paper on recurrent neural networks or to test how deep learning can be used to perform tasks on knowledge graphs.

The beauty of it is that I have the same feeling of building the plane as we’re flying it that I had in the mid-nineties when I started with TCP/IP, HTML and websites!

AI transformation for search engine optimization

In practical terms, the AI transformation here at WordLift (our SEO startup) works this way: we look at how we help companies improve the traffic coming from search engines, break complex tasks down into small chunks of work, and try to automate them using narrow AI techniques. In some cases we simply tap into the top of the AI pyramid and use ready-made APIs; in others we develop and train our own models. In this phase at least, we tend to focus on trivial, repetitive tasks that can bring a concrete and measurable impact on the SEO of a website (e.g. more visits from Google, more engaged users, …), such as:

- Image captioning for image SEO optimization,

- Automatic text summarization to add missing meta descriptions,

- Unsupervised clustering for search queries analysis,

- Semantic textual similarity for title tag optimization,

- Text classification to organize content on existing websites,

- NLP for entity extraction to automate structured data markup,

- NLP for text generation to help you create a keyword suggestion tool,

- …and a lot more coming.
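To make one of these tasks concrete, here is a minimal sketch of filling in missing meta descriptions. It is not how WordLift does it: a naive word-frequency sentence picker stands in for a real summarization model, and the page fields (`url`, `body`, `meta_description`), the 155-character budget and the `needs_review` flag are all assumptions made for illustration.

```python
import re
from collections import Counter

MAX_META_LENGTH = 155  # assumed budget; search engines may truncate differently


def draft_meta_description(text, max_length=MAX_META_LENGTH):
    """Pick the sentence with the highest average word frequency and trim it."""
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    if not sentences:
        return ""
    # Crude stand-in for stop-word removal: ignore very short words.
    words = re.findall(r"[a-z']+", text.lower())
    freq = Counter(w for w in words if len(w) > 3)

    def score(sentence):
        tokens = re.findall(r"[a-z']+", sentence.lower())
        return sum(freq[t] for t in tokens) / (len(tokens) or 1)

    best = max(sentences, key=score)
    if len(best) <= max_length:
        return best
    # Trim at a word boundary and leave room for the ellipsis.
    cut = best[: max_length - 1].rsplit(" ", 1)[0]
    return cut.rstrip(",;: ") + "…"


def fill_missing_descriptions(pages):
    """Return drafts only for pages that have no meta description yet."""
    drafts = []
    for page in pages:
        if page.get("meta_description"):
            continue  # never overwrite what an editor already wrote
        drafts.append({
            "url": page["url"],
            "draft": draft_meta_description(page["body"]),
            "needs_review": True,  # a human should revise every draft
        })
    return drafts
```

The function only produces drafts: each one is flagged for review rather than published automatically.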

We test these approaches with a select number of terrific clients who literally fuel this process. We keep iterating and improving the tooling until we feel ready to add it back into our product and make it available to hundreds of other users.

All along the journey, I’ve learned the following lessons:

1. The AI stack is constantly evolving

AI introduces a completely new paradigm: from teaching computers what to do, to providing the data required for computers to learn what to do.

In this pivotal change, we still lack the infrastructure required to address fundamental problems (e.g. How do I debug a model? How can I prevent or detect a bias in the system? How can I predict an event in a context in which the future is not a mere projection of the past?). This basically means that new programming languages will emerge and new stacks will have to be designed to address these issues right from the beginning. In this continuously evolving scenario, libraries like TensorFlow Hub are a concrete and valuable example of how reusable parts can be consumed in AI and machine learning. This approach also makes these technologies far more accessible to a growing number of people outside the AI community.

2. Semantic data is king

AI depends on data, and any business that wants to implement AI inevitably ends up revamping or building a data pipeline: the way in which the data is sourced, collected, cleaned, processed, stored, secured and managed. In machine learning, we no longer use if-then-else rules to instruct the computer; instead we let the computer learn the rules by providing a training set of data. This approach, while extremely effective, poses several issues, as it is often hard to explain why a computer has learned a specific behavior from the training data. In Semantic AI, knowledge graphs are used to collect and manage the training data. This allows us to check the consistency of the data and to understand, more easily, how the network is behaving and where we might have margin for improvement. Real-world entities and the relationships between them are becoming essential building blocks in the third era of computing. Knowledge graphs are also great at “translating” insights and wisdom from domain experts into a computable form that machines can understand.
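As a rough illustration of what checking the consistency of training data against a knowledge graph can look like, the sketch below compares labeled examples with the types stored in a tiny graph of triples. The triples, the `type` predicate and the function names are hypothetical; a real knowledge graph would typically be queried with SPARQL or a graph API rather than held in a Python list.

```python
from collections import defaultdict

# Hypothetical knowledge graph as (subject, predicate, object) triples.
TRIPLES = [
    ("Rome", "type", "City"),
    ("Rome", "locatedIn", "Italy"),
    ("Italy", "type", "Country"),
    ("WordLift", "type", "Organization"),
]


def types_by_entity(triples):
    """Collect the set of types the graph asserts for each entity."""
    types = defaultdict(set)
    for subject, predicate, obj in triples:
        if predicate == "type":
            types[subject].add(obj)
    return types


def find_inconsistent_examples(training_examples, triples):
    """Return (entity, label, reason) for examples the graph cannot confirm."""
    known = types_by_entity(triples)
    issues = []
    for entity, label in training_examples:
        if entity not in known:
            issues.append((entity, label, "entity not in graph"))
        elif label not in known[entity]:
            issues.append((entity, label, f"graph says {sorted(known[entity])}"))
    return issues


examples = [("Rome", "City"), ("Italy", "City"), ("Paris", "City")]
for issue in find_inconsistent_examples(examples, TRIPLES):
    print(issue)
```

Examples flagged this way can be corrected, or the graph can be extended, before the model is trained.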

3. You need the help of subject-matter experts

Knowledge becomes a business asset when it is properly collected, encoded, enriched and managed. Any AI project you might have in mind always starts with a domain expert providing the right keys to address the problem. In a way, AI is the most human-dependent technology of all time. For example, let’s say that you want to improve the SEO for the images on your website. You will start by looking at the best practices and direct experience of professional SEOs who have been dealing with this issue for years. It is only by analyzing the methods this expert community uses that you can tackle the problem and implement your AI strategy. Domain experts know, well in advance, what can be automated and what results to expect from this automation. A data analyst or an ML developer might think that we can train an LSTM network to write all the meta descriptions of a website on the fly. A domain expert would tell you that Google only uses meta descriptions as search snippets 33% of the time and that, if these texts are not revised by a qualified human, they will produce little or no result in terms of actual clicks (we can provide a decent summary with NLP and automatic text summarization, but enticing a click is a different challenge).

4. Always link data with other data

External data linked with internal data helps you improve how the computer will learn about the world you live in. An organization rarely controls all the data that an ML algorithm needs to become useful and to have a concrete business impact. By building on top of the Semantic Web and Linked Data, and by connecting internal with external data, we can help machines get smarter. When we started designing WordLift’s new dashboard, whose goal is to help editors improve their editorial plan by looking at how their content ranks on Google, it immediately became clear that our entity-centric world would benefit from the query and ranking data gathered by our partner WooRank. The combination of these two pieces of information helped us create the basis for training an agent that will recommend to editors what to write and tell them whether they are reaching the right audience over organic search.
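A minimal sketch of this kind of linking, under stated assumptions: internal entity annotations and external ranking rows are joined on the page URL, then aggregated per entity. The record shapes (`url`, `entities`, `query`, `position`, `clicks`) are hypothetical and do not reflect WordLift’s or WooRank’s actual data formats.

```python
from collections import defaultdict


def link_entities_with_rankings(annotations, rankings):
    """Join internal entity annotations with external ranking rows by URL."""
    rows_by_url = defaultdict(list)
    for row in rankings:
        rows_by_url[row["url"]].append(row)

    stats = defaultdict(lambda: {"clicks": 0, "positions": []})
    for page in annotations:
        # Pages with no ranking data simply contribute nothing.
        for row in rows_by_url.get(page["url"], []):
            for entity in page["entities"]:
                stats[entity]["clicks"] += row["clicks"]
                stats[entity]["positions"].append(row["position"])

    return {
        entity: {
            "clicks": s["clicks"],
            "avg_position": sum(s["positions"]) / len(s["positions"]),
        }
        for entity, s in stats.items()
    }


# Hypothetical internal data: which entities each page is about.
annotations = [
    {"url": "/kg", "entities": ["Knowledge graph", "SEO"]},
    {"url": "/ai", "entities": ["SEO"]},
]
# Hypothetical external data: queries, positions and clicks per page.
rankings = [
    {"url": "/kg", "query": "what is a knowledge graph", "position": 3, "clicks": 40},
    {"url": "/ai", "query": "ai seo", "position": 7, "clicks": 10},
]
print(link_entities_with_rankings(annotations, rankings))
```

The per-entity view is the point of the join: neither dataset alone says which topics attract clicks and which ones rank poorly.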

Conclusions

To shape your AI strategy and improve both technical and organizational measures, you need to study the business requirements carefully with the support of a domain expert. Remember that narrow AI helps us build agentive systems that do things for end-users (like, say, tagging images automatically or building a knowledge graph from your blog posts), as long as we always keep the user at the center of the process.

Wanna learn more? Find out how to Improve your organic Click-Through rate with Machine Learning!