Project Claw 🦞: Field Notes From Agentic Work

What happens when an AI agent becomes part of the team’s daily workflow? The story is not about full automation, but about how teams learn to work with agents.

At WordLift, we are building AI agents for the work we already do.

That changes the relationship with the technology.

We are not observing agents from a distance. We are not building a demo for a conference and then imagining how people might use it later. We are putting Claw inside the daily rhythm of the team: in Slack threads, in reporting workflows, in client conversations, in SEO analysis, and in the moments where something breaks and needs to be fixed.

This is the story of WordLift Claw Bot 🦞 (watch the video here to learn more): our Slack-based agent for SEO, AI Search, Knowledge Graph analysis, Signal Graph monitoring, and causal impact reporting.

Claw can call tools. It can retrieve data. It can use GraphQL. It can query Search Console. It can generate reports. It can produce artifacts. It can explain sources. It can compare variant and control groups.

But that is not the most interesting part.

The interesting part is what happens around the bot.

People question it. They correct it. They challenge its assumptions. They compare its output with previous manual work. They ask where the data comes from. They wait for it. They get frustrated when it fails. They encourage it to continue.

The agent is not simply automating work.

It is changing the social shape of work.

The Setup

Over the last few weeks, I have been looking at how the team uses WordLift Claw Bot in real Slack threads.

Not polished prompts.

Not idealized workflows.

Real work.

In one thread, a specialist corrects the intervention date and offers the real variant and control lists. In another, I ask the bot to use GraphQL to identify the URLs where WordLift is present and Search Console to identify a cleaner control group.

A few minutes later, I ask the question that matters most:

"Are we sure the control group is comparable before the intervention?"

That question changes the task. The bot is no longer just producing a headline number. It has to validate the causal design.
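One way to make that validation concrete is a pre-period check: before reading any uplift, confirm that variant and control moved together before the intervention date. The sketch below is an illustration under assumptions, not Claw's actual implementation. The function names, the daily-clicks inputs, and the 0.8 correlation threshold are all hypothetical choices made for the example.

```python
from dataclasses import dataclass
from math import sqrt
from statistics import mean


@dataclass
class PrePeriodCheck:
    correlation: float  # Pearson correlation of daily clicks before the intervention
    level_ratio: float  # mean(variant) / mean(control) before the intervention
    comparable: bool    # True when the correlation meets the chosen threshold


def pearson(xs, ys):
    """Pearson correlation of two equal-length series (0.0 if either is constant)."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0
    return cov / sqrt(var_x * var_y)


def check_pre_period(variant, control, min_correlation=0.8):
    """Compare pre-intervention daily clicks for the variant and control groups.

    min_correlation is an illustrative threshold, not an established standard.
    """
    if len(variant) != len(control) or len(variant) < 3:
        raise ValueError("need two equal-length series with at least 3 days")
    r = pearson(variant, control)
    control_mean = mean(control)
    ratio = mean(variant) / control_mean if control_mean else float("inf")
    return PrePeriodCheck(correlation=r, level_ratio=ratio, comparable=r >= min_correlation)


# Made-up daily clicks for the week before the intervention.
variant_clicks = [120, 135, 128, 150, 160, 155, 170]
control_clicks = [60, 68, 63, 76, 80, 77, 86]

result = check_pre_period(variant_clicks, control_clicks)
print(result)
```

A high correlation with a stable level ratio suggests the two groups shared a trend before the change, which is what makes a later comparison meaningful. If the check fails, the useful next step is a different control group, not a different headline number.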

Then the pattern expands.

Someone asks what access the bot has. That becomes a new kind of onboarding: not a product tour, but a map of what Claw can actually see and do. Someone else asks it to align marketing and sales by identifying low-hanging fruit across organic and paid. I ask it to analyze a ranking entity page and move from queries, fan-out, and competition into a first editorial rewrite.

This is when the agent starts to look less like a reporting assistant and more like an operating layer.

The Findings

The first thing we learned is that the agent becomes useful before it becomes fully reliable.

The second thing we learned is that adoption changes when the agent leaves the private workspace and enters a shared channel with a client.

Inside the team, the agent is allowed to be rough. It can fail, rerun, and be corrected. In a client-facing space, the bar changes. The output has to be clear, grounded, and immediately useful.

That transition matters.

It is the moment where an internal tool starts becoming part of service delivery.

Client Trust Is A Different Kind Of Test

The first time I brought WordLift Claw Bot into a shared client channel, the task was intentionally concrete.

I did not ask it to make a grand strategic claim. I asked it to check whether a newly published hub page was already gaining traction, based on Search Console or Analytics data.

That choice matters.

When you introduce an agent into a client-facing space, you do not start with the most ambitious workflow. You start with a small, verifiable question.

The useful answer was not hype.

The page had moved from zero impressions to early visibility. Clicks were not there yet. Analytics showed a small number of engaged sessions. No AI referral traffic was visible yet.

The most important part is that the bot did not overclaim.

"No AI referral traffic found."

That restraint is part of trust.

In a market where everyone wants to attach every movement to AI, the agent became more credible by separating what was visible in Search Console and Analytics from what was not yet visible in AI referrals.

Then, in a private chat, the client said:

"That sounds pretty solid."

That sentence matters.

It is not a testimonial. It is a threshold.

The agent had produced something good enough to be part of a client conversation. Not final enough to replace expert work. Not polished enough to become a fully automated deliverable. But solid enough to create confidence, keep the conversation moving, and turn raw data into a next action.

The Human Layer

Every internal agent develops its own folklore.

Here, the folklore is made of small lines.

What Changed In The Team

Before

- Open tools
- Export data
- Find URLs
- Separate variant and control
- Check dates
- Build charts
- Prepare the report
- Move insights manually into content, sales, or client work

With Claw

- A team member asks
- Claw maps its access
- The bot runs
- Someone corrects
- The bot reruns
- Someone validates
- The output becomes a report, a strategy, or a draft
- The workflow improves

Before Claw, the work moved from tool to tool. With Claw, the work starts as a conversation and becomes executable.

The important shift is not that humans disappear.

The shift is that coordination, validation, and production begin to happen in the same place.

What Changed In Me

I am not only observing the team using WordLift Claw Bot 🦞. I am also building it, shaping the tasks, testing the workflows, and using it myself.

As a builder, I see the system architecture.

As a user, I feel the friction.

As a CEO, I see the organizational pattern and the deeper implications of digital transformation.

As an SEO, I care about the methodology.

As a product person, I see every failure as a roadmap item.

And as the person deciding when to bring the agent into a client-facing space, I feel the trust boundary.

That boundary matters.

An internal bot can be experimental. It can be rough, corrected, and rerun. A client-facing agent has to earn its place. It earns it by being grounded, transparent, and useful in small moments before it can be trusted with larger ones.

The Future

This is what I expect agentic workflows to look like in professional services.

Not full autonomy.

Not one-shot answers.

Not magic.

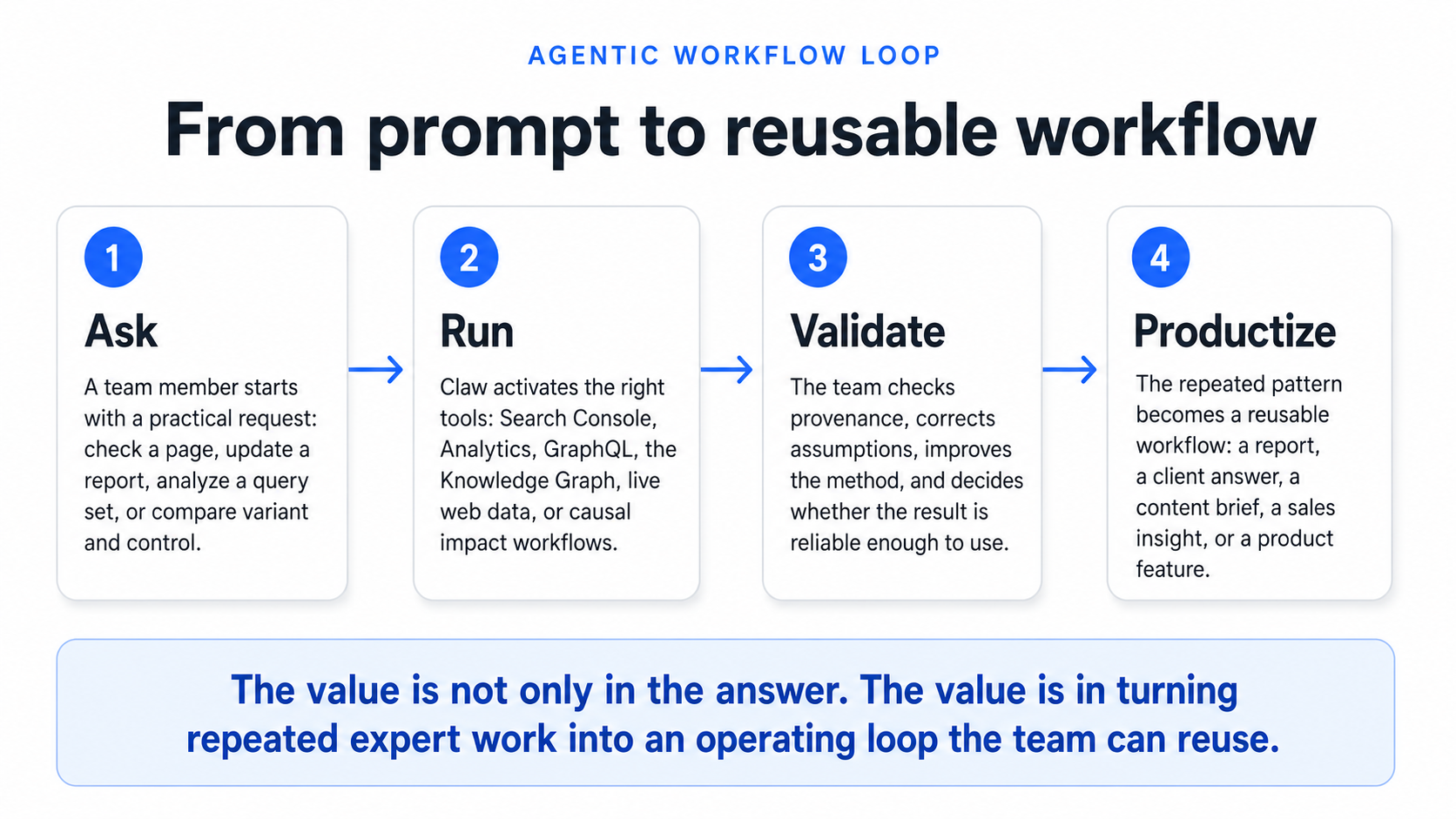

Instead: agents that start the work, humans who define the method, experts who validate the assumptions, systems that expose provenance, reports that can be regenerated, errors that become product feedback, and workflows that become reusable over time.

The agent will not replace the analyst.

It will change what the analyst spends time on.

Less time assembling the first draft.

More time validating the design.

Less time searching for data.

More time checking whether the comparison is meaningful.

Less time making charts manually.

More time deciding what the chart proves.

And this is where Claw becomes more than an internal experiment.

It becomes a way to productize the operating layer around SEO: the questions, the data sources, the validation steps, the client-facing reports, and the repeatable workflows that usually live only in people’s heads.

Final Thought

The most important thing I am learning from WordLift Claw Bot 🦞 is not that AI agents can automate SEO tasks.

It is that they change the anthropology of work.

People begin to ask differently.

They begin to delegate differently.

They begin to challenge outputs differently.

They begin to encode their expertise into prompts, corrections, and reruns.

The agent becomes a mirror of the organization.

It shows where work is repetitive.

Where trust is fragile.

Where data provenance matters.

Where experts are essential.

Where the product is still weak.

Where workflows should become infrastructure.

This is why the messy Slack threads matter.

They are not just logs.

They are the early field notes of agentic work.

And, for us, they are also the blueprint for what we are building: Claw as the agentic operating layer for SEO teams.